Up and running in under 2 minutes.

1. Run ./start-recallium.sh (macOS/Linux) or start-recallium.bat (Windows).
2. The browser opens automatically at http://localhost:9001 → complete the Setup Wizard below.
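If the browser does not open on its own, a small script can confirm that the UI is answering before you open it by hand. The following is only a sketch: it assumes the UI answers plain HTTP at the address given above, and the function names, retry count, and delay are illustrative choices, not part of Recallium.

```python
import time
import urllib.error
import urllib.request

UI_URL = "http://localhost:9001"  # address from the quick start above


def is_up(url: str = UI_URL, timeout: float = 2.0) -> bool:
    """Return True if an HTTP request to `url` gets any response at all."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server answered, just not with a 2xx status.
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, timeout, DNS failure, and similar.
        return False


def wait_until_up(check=is_up, attempts: int = 30, delay: float = 2.0) -> bool:
    """Call `check` until it returns True or `attempts` are used up."""
    for _ in range(attempts):
        if check():
            return True
        time.sleep(delay)
    return False


if __name__ == "__main__":
    print("Recallium UI reachable:", wait_until_up())
```

The `check` parameter is injectable so the polling loop can be exercised without a running server.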

Windows + Ollama? See firewall setup first.

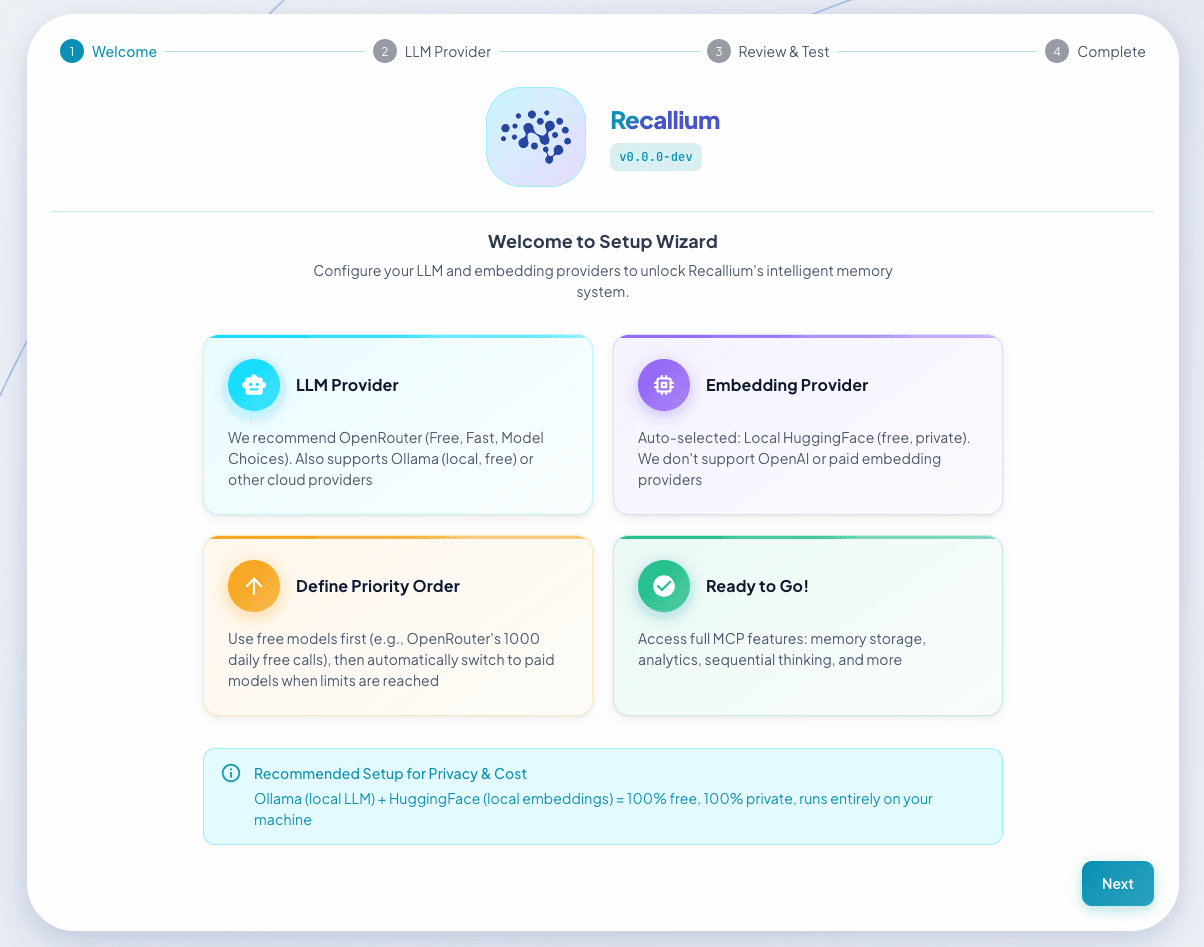

The Setup Wizard opens at http://localhost:9001:

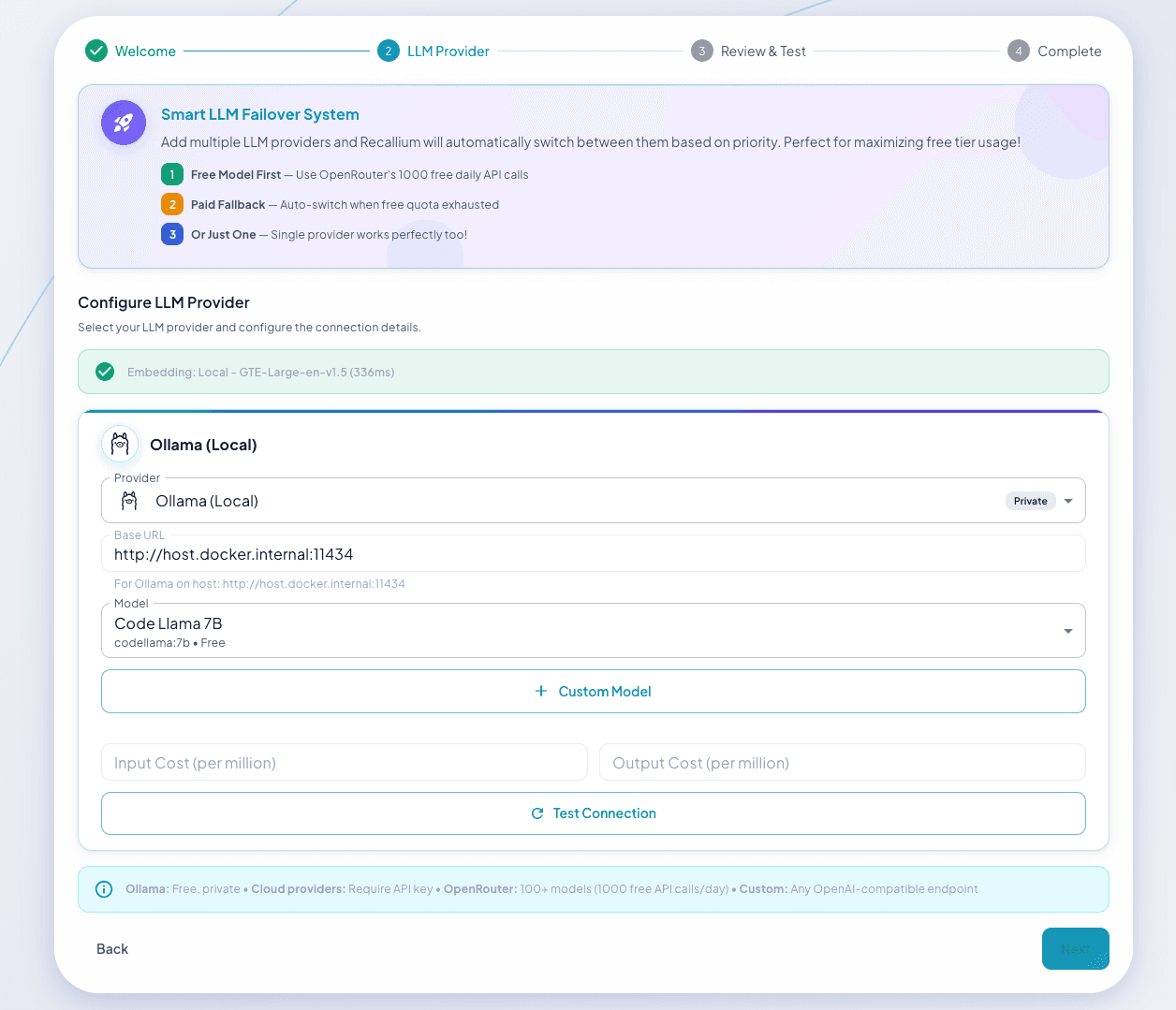

Test connection before adding a provider

Use existing API keys from major providers

| Provider | Models | Notes |
|---|---|---|
| Anthropic | Claude 3.5 Sonnet, Claude 3 Opus/Sonnet/Haiku | Recommended for best results |
| OpenAI | GPT-4o, GPT-4 Turbo, GPT-3.5 Turbo | Function calling, streaming, vision |
| Google Gemini | Gemini 1.5 Pro, Gemini 1.5 Flash | Multi-modal support |
| OpenRouter | 100+ models via single API | Access any model, unified billing |

Run completely offline, no API costs

| Provider | Models | Notes |
|---|---|---|
| Ollama | Llama 3, Mistral, Qwen, any local model | Free, private - runs entirely on your machine |

For corporate environments with compliance requirements

| Provider | Models | Notes |
|---|---|---|
| Palantir AIP | Models via Foundry platform | Enterprise-grade, compliance-ready |

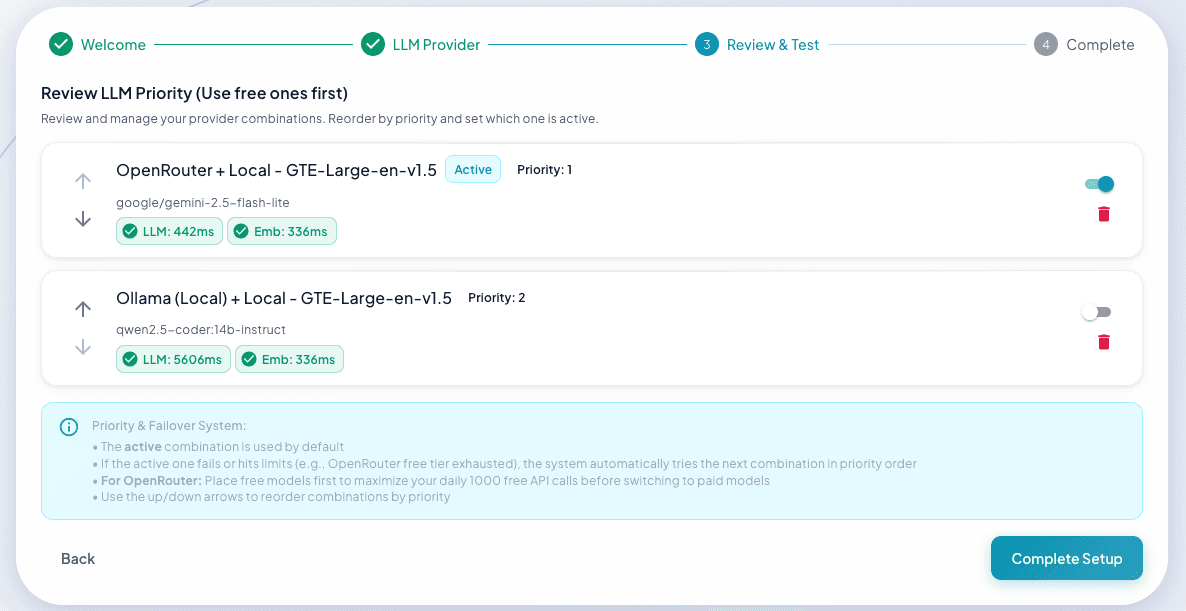

Configure provider priority for automatic failover
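To make the idea of priority-based failover concrete, here is a minimal sketch of the general pattern: try each provider in order and fall through to the next one on error. It does not reflect how Recallium implements failover internally. The `(name, call)` provider shape and the function name are hypothetical, chosen only for illustration.

```python
from typing import Callable, Sequence, Tuple

# Hypothetical shape: a display name plus a function that sends a prompt.
Provider = Tuple[str, Callable[[str], str]]


def complete_with_failover(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try providers in priority order; return (name, reply) from the first success."""
    errors = []
    for name, call in providers:
        try:
            return name, call(prompt)
        except Exception as exc:  # a real client would catch narrower error types
            errors.append(f"{name}: {exc}")
    raise RuntimeError("all providers failed: " + "; ".join(errors))


if __name__ == "__main__":
    def unreachable(prompt: str) -> str:
        raise ConnectionError("provider unreachable")

    def echo(prompt: str) -> str:
        return "echo: " + prompt

    # The first provider fails, so the call falls through to the second.
    print(complete_with_failover("hello", [("primary", unreachable), ("backup", echo)]))
```

Ordering the list is the whole configuration in this sketch: the provider you trust most goes first, and a local provider such as Ollama could sit last as an offline fallback.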

MCP tools are now available to all connected IDEs.

Go to: Settings → Cursor Settings → MCP → Add new global MCP server

```json
{
  "mcpServers": {
    "recallium": {
      "url": "http://localhost:8001/mcp",
      "transport": "http"
    }
  }
}
```

For Windsurf, JetBrains, Zed, Cline, and more, see the detailed installation guide.
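If your IDE already has other MCP servers configured, the Recallium entry needs to be merged in rather than pasted over the existing file. The sketch below shows one way to do that on an already-loaded config. It assumes the config is a JSON object with a top-level `mcpServers` key, as in the snippet above; the helper name `add_recallium` and the `other-tool` server are hypothetical, and where the config file lives depends on the IDE and is not covered here.

```python
import json

# Entry copied from the configuration shown above.
RECALLIUM_ENTRY = {
    "url": "http://localhost:8001/mcp",
    "transport": "http",
}


def add_recallium(config: dict) -> dict:
    """Return a copy of `config` with a "recallium" server under "mcpServers".

    Other servers are kept; the input dict is not modified.
    """
    merged = dict(config)
    servers = dict(merged.get("mcpServers", {}))
    servers["recallium"] = dict(RECALLIUM_ENTRY)
    merged["mcpServers"] = servers
    return merged


if __name__ == "__main__":
    # Hypothetical existing config with one unrelated server.
    existing = {"mcpServers": {"other-tool": {"url": "http://localhost:7000/mcp"}}}
    print(json.dumps(add_recallium(existing), indent=2))
```

Returning a copy instead of editing in place makes it easy to compare the result with the original before writing anything back to disk.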

MiniMe was the first version of this AI memory system. If you've been using MiniMe and want to bring all your memories into Recallium, the migration is simple:

1. Export your data from MiniMe (as a .zip file)
2. Open Recallium at http://localhost:9001
3. Import the .zip file you exported

Your memories, projects, rules, and tasks will all be imported. You can continue right where you left off.